
Higgsfield with socialAF

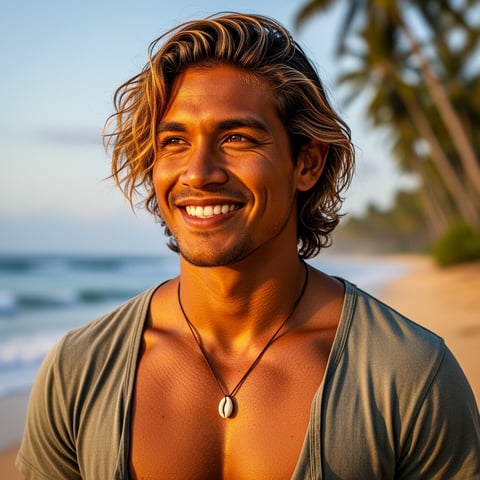

Turn your Higgsfield ideas into a repeatable character content engine with socialAF. Whether you are creating cinematic short-form videos, AI influencer campaigns, branded personas, UGC-style ads, or story-driven social clips, the hard part is not generating one good asset. It is keeping the same face, style, voice, wardrobe, and personality consistent across every post.

socialAF helps you build that foundation first. Create a reference-driven AI character, generate multi-angle reference packs, clone or design a voice, and organize every asset in a per-character gallery. Then produce images, videos, talking avatars, and text-to-speech variations without rebuilding your persona from scratch.

Modern Higgsfield workflows emphasize camera-controlled, social-first video: moves such as crash zooms, crane shots, dolly motion, and orbit shots, combined with image-to-video storytelling. Your character assets therefore need to be clean, directional, and reusable before you animate them. ([higgsfield.ai](https://higgsfield.ai/camera-controls?utm_source=openai))

With socialAF, you can create Higgsfield-ready characters that look intentional in close-ups, product placements, talking-head clips, lifestyle scenes, and campaign sequences. You get the speed of AI generation with the structure agencies and creators need: consistent identities, organized galleries, bulk generation, and multi-org workspaces built for recurring content production.
